They Found a Way to Thematically Sort All of Wikipedia on a Laptop

Computer scientist David Blei, with co-authors Matthew Hoffman and Francis Bach, is recognized with a Test of Time Award at NeurIPS, the world’s top machine learning conference, for scaling his topic modeling algorithm to billions of documents.

No sooner had the internet put mountains of knowledge at our fingertips than a new problem emerged: finding what we needed. David Blei, a professor of computer science and statistics at Columbia, has helped us find those nuggets of gold with his statistical methods for organizing documents thematically, making it easier to search and explore massive bodies of text.

His topic modeling algorithm today is embedded in everything from spam filters to recommendation engines, but until a decade ago, topic modeling had limited reach, overwhelmed by datasets much larger than a few hundred thousand documents.

David Blei Explains How Topic Modeling Algorithms Work

In a landmark paper, Online Learning for Latent Dirichlet Allocation, Blei and his co-authors Matthew Hoffman and Francis Bach introduced a way to extend topic modeling to millions and billions of documents. Blei, Hoffman, and Bach were recently awarded a Test of Time Award for their work at the Neural Information Processing Systems (NeurIPS) conference.

“This paper took topic modeling, an extremely popular technique, and made it scalable to mammoth datasets,” said John P. Cunningham, an associate professor of statistics at Columbia who was not involved in the research. “They did it using stochastic optimization, a statistical technique that’s now so widely used it’s nearly impossible to imagine modern machine learning and AI without it."

"The magical thing about topic modeling is that it finds this structure without being given any hints or deep knowledge about English or any other language.”

Predicting Topics by Extracting Patterns Among Words

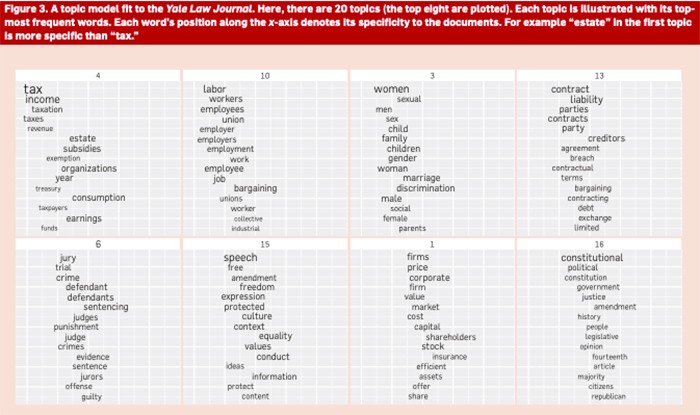

Blei developed topic modeling, a statistical technique he called Latent Dirichlet Allocation, or LDA, as a grad student at the University of California, Berkeley. LDA allowed an algorithm to ingest an archive of, say, news articles, and sort them into topics like sports, health, politics, and health without any prior knowledge of those subjects. The algorithm could extract broad themes by finding statistical patterns among the words themselves.

Topic modeling was as effective at parsing news stories as it was products; replacing documents with customers, for example, and words with products, the algorithm might discover “art supplies” as a topic, divided into themes like paint, paintbrushes, and colored pencils. “The magical thing about topic modeling is that it finds this structure without being given any hints or deep knowledge about English or any other language,” said Hoffman (SEAS ‘03) now a researcher at Google.

A Faster Way to Sift Through Massive Datasets

From its debut in 2003, topic modeling had an enormous impact. But as datasets grew larger, the algorithm struggled. Blei and Bach were discussing the problem one night at a bar, when they realized that stochastic optimization, an idea introduced decades earlier by statistics professor Herbert Robbins could provide a work around.

In a groundbreaking paper published in 1951, just before coming to Columbia, Robbins explained how an optimization problem could be solved by estimating the gradient through randomized approximation, a methodology now called stochastic optimization. The technique was later used to efficiently approximate gradients in a sea of data points. Rather than calculate the gradient precisely, using all of the data, the optimizer repeatedly samples a subset of the data to get a rough estimate much faster.

Stochastic optimization today underlies much of modern AI, enabling complex models like deep neural networks to quickly fit to massive datasets. Back in the early aughts, however, stochastic optimization was still finding its way into big models. At the time, Bach was using stochastic optimization to fill in pixels missing from images in computer vision models.

When Bach, Blei, and Hoffman, who was Blei’s grad student at the time, combined stochastic optimization and topic modeling, they discovered their algorithm could fit a model with millions of data points — be they New York Times stories, emails, or variants in thousands of human genomes. Stochastic optimization has been so pivotal in AI, said Blei, that four of the last five Test of Time papers at NeurIPS have hinged on it.

“The real test-of-time winner is Herb Robbins,” he said.

The ability to scale topic models soon led to another innovation: stochastic variational inference. In a highly cited followup paper, Hoffman and Blei, with co-authors Chong Wong and John Paisley, now a professor at Columbia Engineering, showed how stochastic variational inference could take the stochastic optimization algorithm they had applied to topic modeling and generalize it to a wide range of models.

Stochastic optimization plus variational inference has been used to scale recommendation systems and the analysis of social networks, genetic data, and other huge datasets. It has also inspired entirely new types of machine learning models, including deep generative models.

Online LDA was the "precursor not just to the stochastic variational inference paper, but to machine learning models that can learn analogs of ‘topics’ for data like images and robot motions,” said Jacob Andreas, a computer scientist at MIT who was not involved in the research. “What’s also always impressed me about this whole line of work on topic modeling is how many researchers in the humanities and social sciences are using it.”